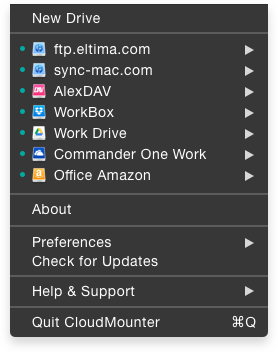

I tried to back up Google Photos to iDrive. When I do it via rclone alone it works pretty well, apart from some 429 RESOURCE_EXHAUSTED errors. When I do it through Duplicacy, it is still indexing after hours and hasn't started the backup.

Running backup command from /root/.duplicacy-web/repositories/localhost/1 to back up /root/googlephotos_new
10:16:47.970 INFO REPOSITORY_SET Repository set to /root/googlephotos_new
10:16:47.970 INFO STORAGE_SET Storage set to
10:16:48.664 INFO BACKUP_START No previous backup found
10:16:48.665 INFO INCOMPLETE_LOAD Previous incomlete backup contains 193422 files and 0 chunks
10:16:48.665 INFO BACKUP_LIST Listing all chunks
10:16:48.731 INFO BACKUP_INDEXING Indexing /root/googlephotos_new
10:16:48.731 INFO SNAPSHOT_FILTER Parsing filter file /root/.duplicacy-web/repositories/localhost/1/.duplicacy/filters
10:16:48.731 INFO SNAPSHOT_FILTER Loaded 0 include/exclude pattern(s)

Thanks for your reply. I am using Docker on a Raspberry Pi 4 (8 GB model). I found that rclone copy works well, so I am not sure that rclone is the issue here. When I used the -d flag I saw the following in the log:

00:20:35.387 DEBUG PASSWORD_ENV_VAR Reading the environment variable DUPLICACY_MEDIA_S3_ID
00:20:35.387 DEBUG PASSWORD_ENV_VAR Reading the environment variable DUPLICACY_MEDIA_S3_SECRET
00:20:35.935 DEBUG STORAGE_NESTING Chunk read levels:, write level: 1
00:20:35.974 DEBUG BACKUP_PARAMETERS top: /mnt/googlephotos.old/media, quick: true, tag:
00:20:35.974 TRACE SNAPSHOT_DOWNLOAD_LATEST Downloading latest revision for snapshot media
00:20:35.974 TRACE SNAPSHOT_LIST_REVISIONS Listing revisions for snapshot media
00:20:36.041 INFO BACKUP_START No previous backup found
00:20:36.041 INFO INCOMPLETE_LOAD Previous incomlete backup contains 26354 files and 0 chunks
00:20:36.042 INFO BACKUP_LIST Listing all chunks
00:20:36.042 TRACE LIST_FILES Listing chunks/
00:20:36.107 INFO BACKUP_INDEXING Indexing /mnt/googlephotos.old/media
00:20:36.107 INFO SNAPSHOT_FILTER Parsing filter file /cache/localhost/1/.duplicacy/filters
00:20:36.108 DEBUG REGEX_DEBUG There are 0 compiled regular expressions stored
00:20:36.108 INFO SNAPSHOT_FILTER Loaded 0 include/exclude pattern(s)
00:20:36.109 DEBUG LIST_ENTRIES Listing all/

For now I picked iDrive just because of its price. I used their regular backup plan a decade ago and they were fine.

Is there any better option to mount Google Photos with NFS? I am not aware of any client that runs properly on Linux or in Docker on arm64.

What would be the preferred way to mount Google Photos without losing image quality or metadata such as GPS?

As indicated above, it’s impossible, but in no way unexpected: Google really wants you to keep your photos with them and makes it extremely difficult to take your data elsewhere. Yes, you can use Takeout, but then go ahead, try to delete your photo library. I’m talking about hundreds of thousands of photos, in bulk. You can’t use rclone for that, because of the (wink wink) “a bug”, and you can’t do it via the web interface either.

It’s obvious why: your data is what Google bartered from you in exchange for the use of free photo management software. It’s payment. It would be weird if they allowed you to yank it back on a whim.

Do I need to state the obvious? The solution here is not to engineer more workarounds. The solution is to stop using free services in general, and Google’s in particular. These free services are very expensive, I’d say unaffordable to the vast majority of people. (There was a paper somewhere, I’ll link it if I find it: each free user, or rather the data they bring, is worth around $40 to Google annually.)

On a separate note, I would strongly recommend searching for “iDrive” on this and other forums.
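For anyone trying to see where a run like the ones quoted in this thread stops making progress, here is a minimal Python sketch that splits such log lines into fields and reports the last event seen. The `HH:MM:SS.mmm LEVEL EVENT_ID message` layout is inferred only from the lines pasted above, not from any Duplicacy specification, and `parse_line` and `summarize` are hypothetical helper names made up for this illustration.

```python
import re
from collections import Counter

# Assumed layout, inferred from the log lines quoted in this thread:
# "HH:MM:SS.mmm LEVEL EVENT_ID message"
LINE_RE = re.compile(
    r"^(?P<time>\d{2}:\d{2}:\d{2}\.\d{3})\s+"
    r"(?P<level>[A-Z]+)\s+"
    r"(?P<event>[A-Z0-9_]+)\s*"
    r"(?P<message>.*)$"
)


def parse_line(line):
    """Return a dict of fields for one log line, or None if it does not match."""
    match = LINE_RE.match(line.strip())
    return match.groupdict() if match else None


def summarize(lines):
    """Return the last parsed entry and a count of entries per log level."""
    entries = [e for e in map(parse_line, lines) if e is not None]
    levels = Counter(e["level"] for e in entries)
    last = entries[-1] if entries else None
    return last, levels


# Sample lines copied from the first log in the thread.
sample = [
    "10:16:48.664 INFO BACKUP_START No previous backup found",
    "10:16:48.665 INFO BACKUP_LIST Listing all chunks",
    "10:16:48.731 INFO BACKUP_INDEXING Indexing /root/googlephotos_new",
    "10:16:48.731 INFO SNAPSHOT_FILTER Loaded 0 include/exclude pattern(s)",
]

last, levels = summarize(sample)
print(last["event"], last["time"], dict(levels))
```

If the last event stays at the indexing or filter stage for hours, as described above, that suggests the time is going into listing files on the mount rather than into uploading chunks. This is only a reading aid and does not diagnose the cause.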
